Can I run an LLM on my phone?

Asked on 2024-07-31

Yes, you can run a large language model (LLM) on an iPhone. Apple provides several tools and frameworks for deploying and running machine learning models on its devices.

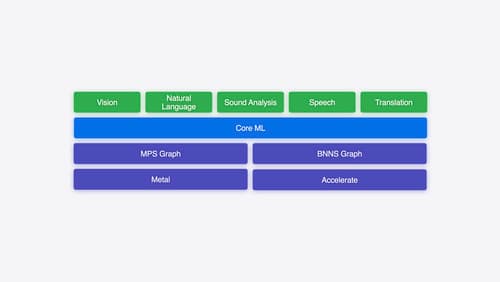

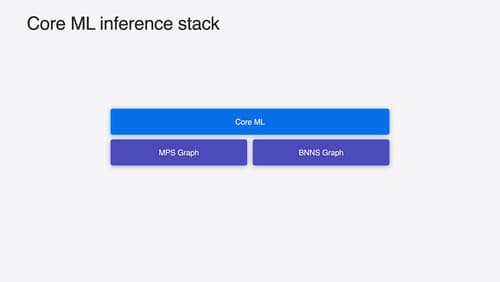

You can start with any PyTorch model and convert it to the Core ML format using Core ML Tools (the `coremltools` Python package). Core ML optimizes hardware-accelerated execution across the CPU, GPU, and Neural Engine, making it suitable for running a wide array of models, including large language models, on iOS devices.
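
As a rough illustration of the conversion step described above, the sketch below traces a tiny PyTorch model and converts it with `coremltools`. The model, the input name `features`, the function name `convert_to_coreml`, and the output path are all hypothetical placeholders. A real LLM would be much larger and would typically need extra optimization (for example, weight compression) before it fits comfortably on a phone. It assumes `torch` and `coremltools` are installed.

```python
"""Sketch: convert a small PyTorch model to Core ML with coremltools."""


def convert_to_coreml(output_path="TinyModel.mlpackage"):
    # Imported lazily so this file can be loaded without the heavy dependencies.
    import torch
    import coremltools as ct

    # Hypothetical stand-in model; a real LLM would be far larger.
    model = torch.nn.Sequential(
        torch.nn.Linear(16, 32),
        torch.nn.ReLU(),
        torch.nn.Linear(32, 4),
    ).eval()

    # Core ML Tools converts a traced (TorchScript) version of the model.
    example_input = torch.rand(1, 16)
    traced = torch.jit.trace(model, example_input)

    mlmodel = ct.convert(
        traced,
        inputs=[ct.TensorType(name="features", shape=tuple(example_input.shape))],
        convert_to="mlprogram",            # ML Program format, saved as .mlpackage
        compute_units=ct.ComputeUnit.ALL,  # allow CPU, GPU, and Neural Engine
    )
    mlmodel.save(output_path)
    return output_path


if __name__ == "__main__":
    print(convert_to_coreml())
```

The saved `.mlpackage` can then be added to an Xcode project and loaded through the Core ML framework in the app.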

For more detailed information, you can refer to the following sessions from WWDC 2024:

- Explore machine learning on Apple platforms (07:16)

- Platforms State of the Union (15:45)

- Deploy machine learning and AI models on-device with Core ML (01:07)

These sessions cover the process of converting, optimizing, and running machine learning models on Apple devices, including iPhones.

Explore machine learning on Apple platforms

Get started with an overview of machine learning frameworks on Apple platforms. Whether you’re implementing your first ML model or you’re an ML expert, we’ll offer guidance to help you select the right framework for your app’s needs.

Platforms State of the Union

Discover the newest advancements on Apple platforms.

Deploy machine learning and AI models on-device with Core ML

Learn new ways to optimize speed and memory performance when you convert and run machine learning and AI models through Core ML. We’ll cover new options for model representations, performance insights, execution, and model stitching which can be used together to create compelling and private on-device experiences.