How can I use Mistral on.device?

Asked on 2024-08-01

1 search

To use Mistral on-device, you can follow these steps:

-

Model Training and Preparation:

- Start by defining the model architecture and training the model using libraries such as PyTorch or TensorFlow. You can take advantage of Apple silicon and the unified memory architecture on Mac for high-performance model training.

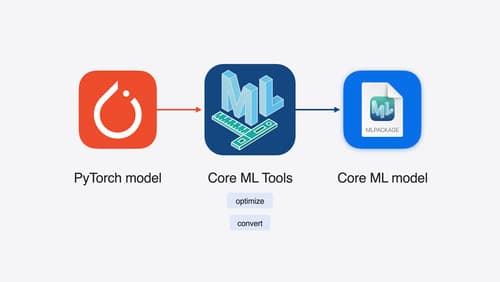

- Convert the trained model into Core ML format for deployment. During this phase, optimize the model representation and parameters to achieve great performance while maintaining good accuracy.

-

Model Conversion:

- Use Core ML tools to convert your PyTorch model into the Core ML format. Core ML tools offer various optimization techniques, such as quantization and efficient key-value caching for large language models (LLMs).

-

Integration with Apple Frameworks:

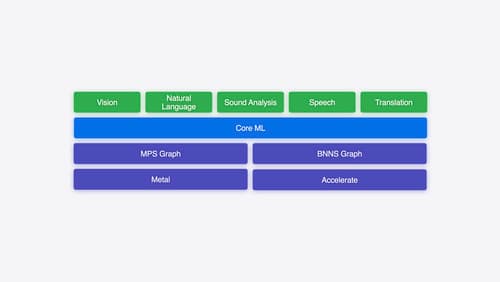

- Write code to integrate the prepared model with Apple frameworks. Use the Core ML framework to load and execute the model within your app. Core ML optimizes hardware-accelerated execution across the CPU, GPU, and neural engine.

-

Optimization and Deployment:

- Utilize new features in Core ML to improve model inference efficiency, such as using state to improve inference efficiency and adopting ML tensor to simplify model integration.

- Deploy the model on-device to create new forms of interaction, powerful professional tools, and insightful analysis while keeping personal data private and secure.

For a detailed walkthrough, you can refer to the session Explore machine learning on Apple platforms (07:32).

Relevant Sessions

- Explore machine learning on Apple platforms

- Deploy machine learning and AI models on-device with Core ML

- Bring your machine learning and AI models to Apple silicon

These sessions provide comprehensive guidance on deploying and running machine learning models, including Mistral, on Apple devices.

Bring your machine learning and AI models to Apple silicon

Learn how to optimize your machine learning and AI models to leverage the power of Apple silicon. Review model conversion workflows to prepare your models for on-device deployment. Understand model compression techniques that are compatible with Apple silicon, and at what stages in your model deployment workflow you can apply them. We’ll also explore the tradeoffs between storage size, latency, power usage and accuracy.

Platforms State of the Union

Discover the newest advancements on Apple platforms.

Explore machine learning on Apple platforms

Get started with an overview of machine learning frameworks on Apple platforms. Whether you’re implementing your first ML model, or an ML expert, we’ll offer guidance to help you select the right framework for your app’s needs.