What's new in RealityKit?

Asked on 2024-08-02

RealityKit gained several new features and improvements in 2024. Here are some of the key updates:

-

Cross-Platform Support: RealityKit 4 now supports macOS, iOS, and iPadOS in addition to visionOS, allowing developers to build for all these platforms simultaneously (Platforms State of the Union).

-

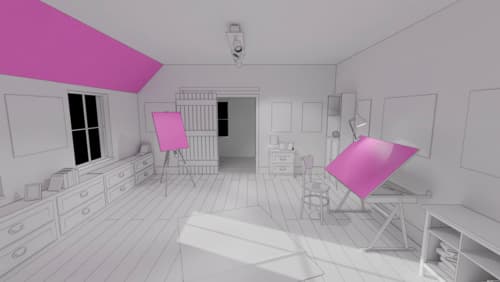

Rendering Styles: RealityKit simplifies rendering 3D models with various styles such as realistic, cel-shaded, or cartoon (Platforms State of the Union).

-

Advanced Character Animation: New APIs for blend shapes, inverse kinematics, and animation timelines enhance character animation capabilities, making interactions more dynamic and responsive (Platforms State of the Union).

-

Low-Level Access: New APIs for low-level mesh and texture access, which work with Metal compute shaders, provide improved control over app appearance and enable fully dynamic models and textures (Platforms State of the Union).

-

USD and MaterialX: RealityKit now supports USD and MaterialX, streamlining the creation of spatial experiences and enhancing compatibility with other tools and platforms (What’s new in USD and MaterialX).

-

Spatial Audio and More: Additional features include spatial audio, low-level mesh and texture construction, animation timelines in Reality Composer Pro, billboard components, pixel cast, and subdivision surfaces (Discover RealityKit APIs for iOS, macOS and visionOS).

-

Interactive 3D Content: Reality Composer Pro has added features like video docking, deployment to iOS and macOS, environment authoring, and lighting (Compose interactive 3D content in Reality Composer Pro).
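
As a small illustration of one item from the list, the billboard component can be attached to an entity so that it keeps facing the viewer. The following is a minimal sketch, not code from the sessions: it assumes the iOS 18, macOS 15, or visionOS 2 SDKs, and the helper name `makeBillboardedLabel` and the plane dimensions are made up for the example.

```swift
import RealityKit

// Hypothetical helper (name and sizes are assumptions for illustration):
// builds a small flat panel that RealityKit keeps turned toward the viewer.
@MainActor
func makeBillboardedLabel() -> ModelEntity {
    // A thin vertical plane stands in for a label or icon.
    let label = ModelEntity(
        mesh: .generatePlane(width: 0.2, height: 0.1),
        materials: [SimpleMaterial(color: .blue, isMetallic: false)]
    )
    // BillboardComponent asks RealityKit to orient the entity toward the camera.
    label.components.set(BillboardComponent())
    return label
}
```

The returned entity can then be added to a scene like any other entity, for example as a child of an anchor or inside a `RealityView`'s content.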

For a detailed overview of these features, see the following sessions:

Platforms State of the Union

Discover the newest advancements on Apple platforms.

Compose interactive 3D content in Reality Composer Pro

Discover how the Timeline view in Reality Composer Pro can bring your 3D content to life. Learn how to create an animated story in which characters and objects interact with each other and the world around them using inverse kinematics, blend shapes, and skeletal poses. We’ll also show you how to use built-in and custom actions, sequence your actions, apply triggers, and implement natural movements.

Create enhanced spatial computing experiences with ARKit

Learn how to create captivating immersive experiences with ARKit’s latest features. Explore ways to use room tracking and object tracking to further engage with your surroundings. We’ll also share how your app can react to changes in your environment’s lighting on this platform. Discover improvements in hand tracking and plane detection which can make your spatial experiences more intuitive.