How can I run an LLM on my phone?

Asked on 2024-07-30


To run a large language model (LLM) on an iPhone, you can follow these steps using Apple's Core ML framework:

-

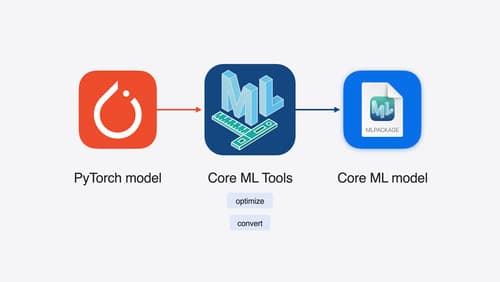

Start with a PyTorch Model: Begin with any PyTorch model that you want to run on your device.

-

Convert to Core ML Format: Use Core ML tools to convert your PyTorch model into the Core ML format. This conversion process can also include optimizations such as quantization and efficient key-value caching for LLMs.

-

Optimize the Model: Core ML tools offer various ways to optimize your model for performance, leveraging Apple Silicon's unified memory, CPU, GPU, and neural engine.

-

Integrate with Core ML: Once your model is converted and optimized, you can integrate it into your app using the Core ML framework. Core ML provides a unified API for performing on-device inference and is tightly integrated with Xcode.

-

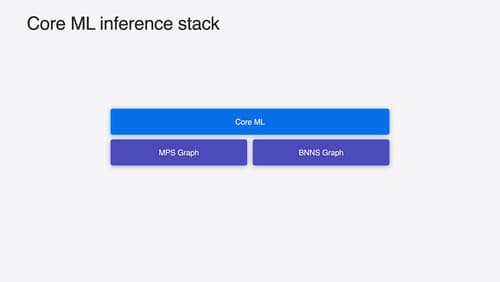

Run the Model: Core ML will handle the execution of your model across the CPU, GPU, and neural engine, ensuring efficient performance.
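The conversion step mentions efficient key-value (KV) caching for LLMs. When a model generates text one token at a time, attention for each new token needs the keys and values of every earlier token; a KV cache stores them so that each step only projects the newest token. Below is a minimal, framework-free Python sketch of that idea for a single attention head. It is not Core ML's API: the weights, token embeddings, and names such as `KVCache` and `step_with_cache` are hypothetical, chosen only to show that caching gives the same result as recomputing.

```python
import math

Vector = list[float]
Matrix = list[list[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix m (rows x cols) by vector v (length cols)."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def attend(q: Vector, keys: list[Vector], values: list[Vector]) -> Vector:
    """Scaled dot-product attention for one query over all keys/values."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
    top = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


class KVCache:
    """Hypothetical cache holding the key/value projections of past tokens."""

    def __init__(self) -> None:
        self.keys: list[Vector] = []
        self.values: list[Vector] = []


def step_with_cache(x: Vector, cache: KVCache, wq: Matrix, wk: Matrix, wv: Matrix) -> Vector:
    """Process one new token embedding x, reusing cached keys/values."""
    cache.keys.append(matvec(wk, x))  # project only the newest token
    cache.values.append(matvec(wv, x))
    return attend(matvec(wq, x), cache.keys, cache.values)


def step_without_cache(prefix: list[Vector], wq: Matrix, wk: Matrix, wv: Matrix) -> Vector:
    """Recompute keys/values for the whole prefix on every step (no cache)."""
    keys = [matvec(wk, x) for x in prefix]
    values = [matvec(wv, x) for x in prefix]
    return attend(matvec(wq, prefix[-1]), keys, values)


# Hypothetical 2-dimensional weights and token embeddings, for illustration only.
WQ = [[1.0, 0.5], [0.0, 1.0]]
WK = [[0.5, 0.0], [1.0, 1.0]]
WV = [[1.0, 0.0], [0.0, 2.0]]
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

cache = KVCache()
cached_outputs = [step_with_cache(t, cache, WQ, WK, WV) for t in tokens]
full_outputs = [step_without_cache(tokens[: i + 1], WQ, WK, WV) for i in range(len(tokens))]
```

Both paths produce the same outputs. The cached version projects only the newest token at each step, while the uncached one re-projects the entire prefix; the attention over all stored keys still grows with sequence length in both. In a full transformer this cache is kept per layer and per head, and it is the state that has to persist between generation steps.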

For more detailed guidance, you can refer to the following sessions from WWDC 2024:

- Platforms State of the Union (from 16:37)

- Explore machine learning on Apple platforms (from 07:32)

- Deploy machine learning and AI models on-device with Core ML (from 00:57)

These sessions cover the workflow and tools for running machine learning models on Apple devices in more detail.

Bring your machine learning and AI models to Apple silicon

Learn how to optimize your machine learning and AI models to leverage the power of Apple silicon. Review model conversion workflows to prepare your models for on-device deployment. Understand model compression techniques that are compatible with Apple silicon, and at what stages in your model deployment workflow you can apply them. We’ll also explore the tradeoffs between storage size, latency, power usage and accuracy.
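To make the tradeoff between storage size and accuracy concrete, here is a minimal, framework-free Python sketch of symmetric linear weight quantization, one of the compression techniques Core ML Tools supports. It does not use the `coremltools` API; the function names, block size, bit widths, sample weights, and the 3-billion-parameter model size are illustrative assumptions. Each block of weights shares one float scale, weights are rounded to signed integers, and dequantizing shows the rounding error that compression introduces.

```python
import math


def quantize_blocks(weights: list[float], bits: int, block_size: int) -> tuple[list[int], list[float]]:
    """Symmetric per-block linear quantization (illustrative, not coremltools).

    Each block of `block_size` weights shares one scale chosen so the block's
    largest magnitude maps to the largest signed integer (127 for 8-bit, 7 for 4-bit).
    """
    qmax = 2 ** (bits - 1) - 1
    codes: list[int] = []
    scales: list[float] = []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        max_abs = max(abs(w) for w in block)
        scale = max_abs / qmax if max_abs > 0 else 1.0  # avoid dividing by zero
        scales.append(scale)
        # Round to the nearest integer and clamp to the signed range.
        codes.extend(max(-qmax, min(qmax, round(w / scale))) for w in block)
    return codes, scales


def dequantize_blocks(codes: list[int], scales: list[float], block_size: int) -> list[float]:
    """Map integer codes back to approximate float weights."""
    return [c * scales[i // block_size] for i, c in enumerate(codes)]


def storage_bytes(num_weights: int, bits: int, block_size: int, scale_bits: int = 16) -> int:
    """Approximate size: packed integer codes plus one float16 scale per block."""
    num_blocks = math.ceil(num_weights / block_size)
    return math.ceil((num_weights * bits + num_blocks * scale_bits) / 8)


# Hypothetical weights, split into two blocks of four.
weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.25, -0.81, 0.44]
for bits in (8, 4):
    codes, scales = quantize_blocks(weights, bits, block_size=4)
    restored = dequantize_blocks(codes, scales, block_size=4)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit codes {codes}, max error {max_err:.4f}")

# Rough storage for a hypothetical 3-billion-parameter model.
n = 3_000_000_000
print(f"float16: {n * 2 / 1e9:.2f} GB")
print(f"4-bit, block size 32: {storage_bytes(n, 4, 32) / 1e9:.2f} GB")
```

Going from 8 to 4 bits halves the storage for the integer codes but makes each block's rounding step 127/7 (about 18) times coarser, so the reconstruction error grows. For the hypothetical 3-billion-parameter model, 4-bit codes with one float16 scale per 32 weights come to about 1.69 GB, versus 6.00 GB in float16. Real toolkits may choose block sizes and scale formats differently.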

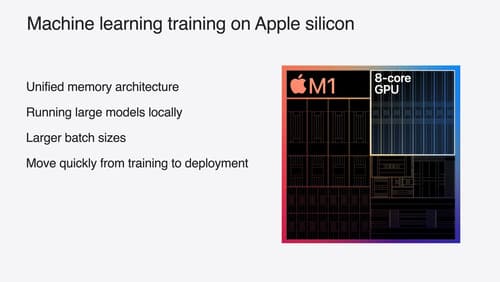

Train your machine learning and AI models on Apple GPUs

Learn how to train your models on Apple silicon with Metal for PyTorch, JAX and TensorFlow. Take advantage of new attention operations and quantization support for improved transformer model performance on your devices.

Deploy machine learning and AI models on-device with Core ML

Learn new ways to optimize speed and memory performance when you convert and run machine learning and AI models through Core ML. We’ll cover new options for model representations, performance insights, execution, and model stitching, which can be used together to create compelling and private on-device experiences.