What's new for RealityKit?

Asked on 2024-08-11

1 search

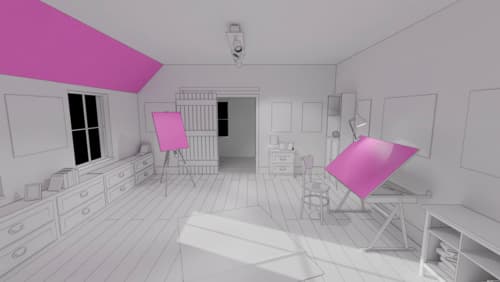

RealityKit gained several new features and improvements in 2024, as highlighted in the WWDC24 sessions. The key updates are:

- Cross-Platform Alignment: RealityKit 4 is now aligned across macOS, iOS, iPadOS, and visionOS, allowing developers to build for all these platforms simultaneously. This includes support for rich materials, virtual lighting, and new APIs like blend shapes, inverse kinematics, and animation timelines, which enhance character animation capabilities (Platforms State of the Union).

- Low-Level Access: New APIs provide low-level access to mesh and texture resources, enabling developers to construct and update these resources with more control. This is particularly useful for creating dynamic models and textures (Discover RealityKit APIs for iOS, macOS and visionOS).

- Animation Enhancements: Animation timelines can now be created in Reality Composer Pro, and the animation system adds full-body inverse kinematics and blend shape animation (Compose interactive 3D content in Reality Composer Pro).

- New Components and Features: Additions include the billboard component, which keeps entities facing the user, and pixel cast, which allows for pixel-perfect entity selection. Subdivision surfaces enable rendering smooth surfaces without dense meshes (Discover RealityKit APIs for iOS, macOS and visionOS).
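
As a rough illustration of the billboard component mentioned above, here is a minimal Swift sketch. It assumes an SDK that ships `BillboardComponent` (iOS 18, macOS 15, or visionOS 2 and later). The function name `makeBillboardLabel` and the plane dimensions are hypothetical, chosen only for this example, and the entity still has to be added to a scene or `RealityView` by the surrounding app.

```swift
import RealityKit

// Hypothetical helper (not from the sessions): builds a small flat panel
// that keeps turning toward the viewer.
@MainActor
func makeBillboardLabel() -> ModelEntity {
    // A simple unlit plane stands in for a label or icon.
    let label = ModelEntity(
        mesh: .generatePlane(width: 0.2, height: 0.1),
        materials: [UnlitMaterial(color: .white)]
    )

    // Attaching BillboardComponent asks RealityKit to reorient the entity
    // toward the viewer; no per-frame update code is needed here.
    label.components.set(BillboardComponent())
    return label
}
```

Setting the component once is the whole integration in this sketch, which is why it suits labels and markers that should stay readable from any angle.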

These updates make RealityKit a more powerful tool for creating immersive and interactive 3D and spatial experiences across Apple's platforms.

What’s new in USD and MaterialX

Explore updates to Universal Scene Description and MaterialX support on Apple platforms. Discover how these technologies provide a foundation for 3D content creation and delivery, and learn how they can help streamline your workflows for creating great spatial experiences. Learn about USD and MaterialX support in RealityKit and Storm, advancements in our system-provided tooling, and more.

Create enhanced spatial computing experiences with ARKit

Learn how to create captivating immersive experiences with ARKit’s latest features. Explore ways to use room tracking and object tracking to further engage with your surroundings. We’ll also share how your app can react to changes in your environment’s lighting on this platform. Discover improvements in hand tracking and plane detection which can make your spatial experiences more intuitive.

Platforms State of the Union

Discover the newest advancements on Apple platforms.