Can I run an LLM on my iPhone?

Asked on 2024-07-16

184 searches

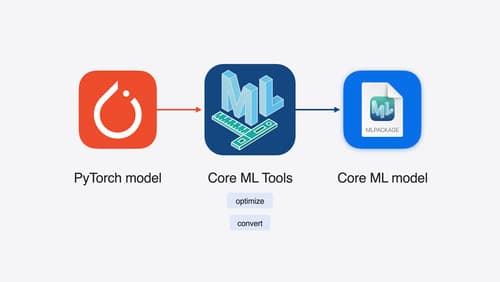

Yes, you can run a large language model (LLM) on your iPhone. Apple provides the tools and frameworks to do this. You can start with a PyTorch model and convert it to the Core ML format using Core ML Tools. These tools offer optimization techniques, such as quantization and efficient key-value caching for LLMs, that help the model run efficiently on Apple devices. Core ML then optimizes hardware-accelerated execution across the CPU, GPU, and Neural Engine, which makes it possible to run a wide range of models, including LLMs, on iOS devices.
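To make the key-value caching mentioned above concrete, here is a minimal pure-Python sketch of single-head attention during token-by-token decoding. It is not Core ML's implementation: the weight matrices, the tiny 2-dimensional vectors, and the names `KVCache`, `decode_with_cache`, and `decode_without_cache` are hypothetical. It only shows that storing each token's key and value once gives the same outputs as recomputing them for the whole prefix at every step.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[Vector]  # stored as a list of rows


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def matvec(w: Matrix, x: Vector) -> Vector:
    # Multiplies matrix w (stored as rows) by vector x.
    return [dot(row, x) for row in w]


def attend(q: Vector, keys: Matrix, values: Matrix) -> Vector:
    # Single-head scaled dot-product attention for one query vector.
    scale = 1.0 / math.sqrt(len(q))
    scores = [dot(q, k) * scale for k in keys]
    m = max(scores)  # subtract the max for a numerically stable softmax
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]


class KVCache:
    """Keeps every past token's key and value so each is computed only once."""

    def __init__(self) -> None:
        self.keys: Matrix = []
        self.values: Matrix = []

    def append(self, k: Vector, v: Vector) -> None:
        self.keys.append(k)
        self.values.append(v)


def decode_with_cache(xs: Matrix, wq: Matrix, wk: Matrix, wv: Matrix) -> Matrix:
    cache = KVCache()
    outputs: Matrix = []
    for x in xs:
        # Project only the newest token; older keys and values come from the cache.
        cache.append(matvec(wk, x), matvec(wv, x))
        outputs.append(attend(matvec(wq, x), cache.keys, cache.values))
    return outputs


def decode_without_cache(xs: Matrix, wq: Matrix, wk: Matrix, wv: Matrix) -> Matrix:
    outputs: Matrix = []
    for t in range(len(xs)):
        # Recompute keys and values for the entire prefix at every step.
        prefix = xs[: t + 1]
        keys = [matvec(wk, x) for x in prefix]
        values = [matvec(wv, x) for x in prefix]
        outputs.append(attend(matvec(wq, xs[t]), keys, values))
    return outputs


if __name__ == "__main__":
    wq = [[1.0, 0.0], [0.0, 1.0]]
    wk = [[0.5, 0.1], [0.2, 0.3]]
    wv = [[1.0, 2.0], [0.0, 1.0]]
    tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    cached = decode_with_cache(tokens, wq, wk, wv)
    uncached = decode_without_cache(tokens, wq, wk, wv)
    print(all(math.isclose(a, b) for u, v in zip(cached, uncached) for a, b in zip(u, v)))  # True
```

With T tokens, the cached loop computes T key projections and T value projections, while the uncached loop computes T(T+1)/2 of each. That growing gap is why caching matters for generating long outputs on a phone.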

For more details, you can refer to the Platforms State of the Union session at WWDC 2024.

Bring your machine learning and AI models to Apple silicon

Learn how to optimize your machine learning and AI models to leverage the power of Apple silicon. Review model conversion workflows to prepare your models for on-device deployment. Understand model compression techniques that are compatible with Apple silicon, and at what stages in your model deployment workflow you can apply them. We’ll also explore the tradeoffs between storage size, latency, power usage and accuracy.
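The tradeoff between storage size and accuracy can be illustrated with a minimal pure-Python sketch of per-tensor symmetric linear quantization, one common compression scheme. This is not the Core ML Tools API, which offers its own, more elaborate options. The sample weights, the 7-billion-parameter model size, and all function names are hypothetical. Lowering the bit width cuts weight storage proportionally, while the reconstruction error grows.

```python
from typing import List, Tuple


def quantize_symmetric(weights: List[float], bits: int) -> Tuple[List[int], float]:
    """Per-tensor symmetric linear quantization to signed integers of `bits` bits."""
    # Python stores these as ordinary ints; `bits` only sets the allowed range.
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    return [v * scale for v in q]


def max_abs_error(weights: List[float], bits: int) -> float:
    q, scale = quantize_symmetric(weights, bits)
    restored = dequantize(q, scale)
    return max(abs(a - b) for a, b in zip(weights, restored))


def weight_storage_gb(num_params: int, bits: int) -> float:
    # Weights only: ignores activations, the KV cache, and the stored scales.
    return num_params * bits / 8 / 1e9


if __name__ == "__main__":
    weights = [0.82, -0.41, 0.05, -0.97, 0.33, 0.61]  # hypothetical layer weights
    for bits in (8, 4):
        print(f"{bits}-bit max error: {max_abs_error(weights, bits):.4f}")
    for bits in (16, 8, 4):
        print(f"7B params at {bits}-bit: {weight_storage_gb(7_000_000_000, bits):.1f} GB")
```

The storage lines print 14.0, 7.0, and 3.5 GB, which shows why aggressive weight compression matters for fitting an LLM into a phone's memory. The 4-bit error is noticeably larger than the 8-bit error, which is the accuracy side of the same tradeoff.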

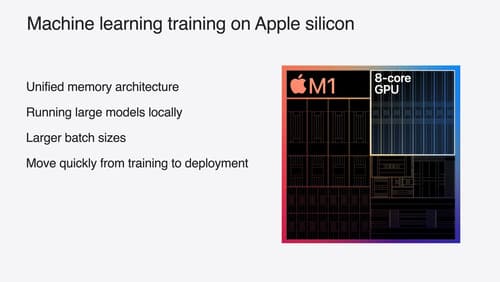

Train your machine learning and AI models on Apple GPUs

Learn how to train your models on Apple silicon with Metal for PyTorch, JAX, and TensorFlow. Take advantage of new attention operations and quantization support for improved transformer model performance on your devices.

Platforms State of the Union

Discover the newest advancements on Apple platforms.