Why can I not add a depthData connection to AVCaptureSession?

Asked on 2025-02-26


To add a depthData connection to an AVCaptureSession, both the capture device and its active format must support depth data capture. In practice this means pairing a depth-capable AVCaptureDevice (for example a dual, TrueDepth, or LiDAR camera) with an output that can receive depth, such as AVCaptureDepthDataOutput. Here are some general steps you might follow:

- Check Device Compatibility: Ensure that the device you are using supports depth data capture. Not all devices or camera configurations support this feature.

- Configure the Capture Session: Set up your AVCaptureSession with the appropriate input and output. You need to use an AVCaptureDevice that supports depth data and configure an AVCaptureOutput that can handle it, such as AVCaptureDepthDataOutput.

- Enable Depth Data: Once you have the correct device and format, set the device's activeDepthDataFormat to one of the formats listed in activeFormat.supportedDepthDataFormats. For still photos, enable isDepthDataDeliveryEnabled on AVCapturePhotoOutput (and on each AVCapturePhotoSettings) instead.
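The steps above can be sketched in Swift as follows. This is a minimal, illustrative setup, not Apple's reference implementation: `makeDepthSession`, `DepthSetupError`, `DepthFormatInfo`, and `indexOfWidestDepthFormat` are hypothetical names, and the sketch assumes iOS 15.4 or later, a preference for `kCVPixelFormatType_DepthFloat32`, and the camera search order shown in the code. The format-picking logic is kept free of AVFoundation so it can be tested without a camera; the capture code is compiled only for iOS.

```swift
// Selection logic kept free of AVFoundation so it can be tested off-device.
// DepthFormatInfo and indexOfWidestDepthFormat are hypothetical helpers.
struct DepthFormatInfo {
    let pixelFormat: UInt32  // CoreVideo pixel format code, e.g. kCVPixelFormatType_DepthFloat32
    let width: Int32
}

/// Index of the widest format whose pixel format equals `preferred`, or nil if none match.
func indexOfWidestDepthFormat(_ formats: [DepthFormatInfo], preferred: UInt32) -> Int? {
    formats.indices
        .filter { formats[$0].pixelFormat == preferred }
        .max { formats[$0].width < formats[$1].width }
}

#if os(iOS)  // AVCaptureDepthDataOutput is not available on macOS
import AVFoundation
import CoreMedia
import CoreVideo

enum DepthSetupError: Error {
    case noDepthCapableCamera
    case cannotAddInput
    case activeFormatHasNoDepthFormats
    case noMatchingDepthFormat
    case cannotAddDepthOutput
    case noDepthConnection
}

/// Hypothetical helper: builds a session that streams depth through AVCaptureDepthDataOutput.
/// Assumes iOS 15.4+ (for .builtInLiDARDepthCamera) and prefers DepthFloat32 depth maps.
@available(iOS 15.4, *)
func makeDepthSession(delegate: AVCaptureDepthDataOutputDelegate,
                      queue: DispatchQueue) throws -> AVCaptureSession {
    // Step 1: find a camera that can produce depth (search order is an assumption).
    let depthCapableTypes: [AVCaptureDevice.DeviceType] = [
        .builtInLiDARDepthCamera, .builtInDualWideCamera, .builtInDualCamera, .builtInTrueDepthCamera,
    ]
    guard let device = depthCapableTypes
        .compactMap({ AVCaptureDevice.default($0, for: .video, position: .unspecified) })
        .first
    else { throw DepthSetupError.noDepthCapableCamera }

    // Step 2: add the input. The session is not running yet, so no begin/commitConfiguration.
    let session = AVCaptureSession()
    let input = try AVCaptureDeviceInput(device: device)
    guard session.canAddInput(input) else { throw DepthSetupError.cannotAddInput }
    session.addInput(input)

    // The active video format must offer at least one depth format.
    let depthFormats = device.activeFormat.supportedDepthDataFormats
    guard !depthFormats.isEmpty else { throw DepthSetupError.activeFormatHasNoDepthFormats }
    let infos = depthFormats.map { format -> DepthFormatInfo in
        let dims = CMVideoFormatDescriptionGetDimensions(format.formatDescription)
        return DepthFormatInfo(pixelFormat: CMFormatDescriptionGetMediaSubType(format.formatDescription),
                               width: dims.width)
    }
    guard let index = indexOfWidestDepthFormat(infos, preferred: kCVPixelFormatType_DepthFloat32) else {
        throw DepthSetupError.noMatchingDepthFormat
    }

    // Step 3: add a depth output; the session wires it to the input's .depthData port.
    let depthOutput = AVCaptureDepthDataOutput()
    guard session.canAddOutput(depthOutput) else { throw DepthSetupError.cannotAddDepthOutput }
    session.addOutput(depthOutput)
    depthOutput.isFilteringEnabled = true
    depthOutput.setDelegate(delegate, callbackQueue: queue)

    // Choose the depth format; it must come from activeFormat.supportedDepthDataFormats.
    try device.lockForConfiguration()
    device.activeDepthDataFormat = depthFormats[index]
    device.unlockForConfiguration()

    // Confirm that a depth connection actually formed.
    guard depthOutput.connection(with: .depthData) != nil else { throw DepthSetupError.noDepthConnection }
    return session
}
#endif
```

You would call `makeDepthSession(delegate:queue:)` off the main thread and then call `startRunning()` on the returned session. Each `throw` corresponds to one of the failure points listed above, which makes it easier to see which step is rejecting the depth connection.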

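If you are encountering issues, it is most likely because the device or its active format does not support depth data, or because the session is not configured correctly. Check canAddOutput(_:) before adding the output, and after adding it confirm that connection(with: .depthData) returns a connection. You can refer to the Build compelling spatial photo and video experiences session for more details on setting up capture sessions and handling different media types.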
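To narrow down which condition is failing, a small diagnostic like the one below may help. It is a sketch under assumptions, not an AVFoundation API: `DepthDiagnostics`, `likelyCauses`, `diagnoseDepth`, and `connectDepthManually` are hypothetical names. The manual-connection path only applies if you added the input and output with `addInputWithNoConnections(_:)` and `addOutputWithNoConnections(_:)`; otherwise the session forms the connection for you.

```swift
// Hypothetical diagnostic helpers; names are illustrative, not AVFoundation API.
struct DepthDiagnostics {
    var inputHasDepthPort: Bool
    var activeFormatDepthFormatCount: Int
    var outputHasDepthConnection: Bool
}

/// Maps observations to likely causes, most fundamental first.
func likelyCauses(_ d: DepthDiagnostics) -> [String] {
    var causes: [String] = []
    if !d.inputHasDepthPort {
        causes.append("Input device exposes no .depthData port: use a depth-capable camera.")
    }
    if d.activeFormatDepthFormatCount == 0 {
        causes.append("activeFormat lists no supportedDepthDataFormats: pick another format or preset.")
    }
    if !d.outputHasDepthConnection {
        causes.append("No .depthData connection reaches the output.")
    }
    return causes
}

#if os(iOS)  // AVCaptureDepthDataOutput is not available on macOS
import AVFoundation

/// Collects the observations above from an input/output pair already added to a session.
func diagnoseDepth(input: AVCaptureDeviceInput, output: AVCaptureDepthDataOutput) -> DepthDiagnostics {
    DepthDiagnostics(
        inputHasDepthPort: input.ports.contains { $0.mediaType == .depthData },
        activeFormatDepthFormatCount: input.device.activeFormat.supportedDepthDataFormats.count,
        outputHasDepthConnection: output.connection(with: .depthData) != nil)
}

/// Manual wiring, for sessions built with addInputWithNoConnections/addOutputWithNoConnections.
/// Returns false if the input has no depth port or the session rejects the connection.
func connectDepthManually(session: AVCaptureSession,
                          input: AVCaptureDeviceInput,
                          output: AVCaptureDepthDataOutput) -> Bool {
    guard let depthPort = input.ports.first(where: { $0.mediaType == .depthData }) else { return false }
    let connection = AVCaptureConnection(inputPorts: [depthPort], output: output)
    guard session.canAddConnection(connection) else { return false }
    session.addConnection(connection)
    return true
}
#endif
```

If the input has a depth port but the active format lists no depth formats, switching the device to a format whose `supportedDepthDataFormats` is non-empty may be the fix.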

Build compelling spatial photo and video experiences

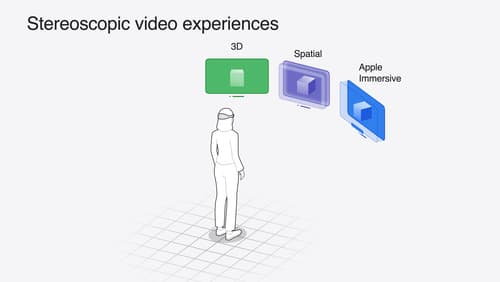

Learn how to adopt spatial photos and videos in your apps. Explore the different types of stereoscopic media and find out how to capture spatial videos in your iOS app on iPhone 15 Pro. Discover the various ways to detect and present spatial media, including the new QuickLook Preview Application API in visionOS. And take a deep dive into the metadata and stereo concepts that make a photo or video spatial.

Discover area mode for Object Capture

Discover how area mode for Object Capture enables new 3D capture possibilities on iOS by extending the functionality of Object Capture to support capture and reconstruction of an area. Learn how to optimize the quality of iOS captures using the new macOS sample app for reconstruction, and find out how to view the final results with Quick Look on Apple Vision Pro, iPhone, iPad or Mac. Learn about improvements to 3D reconstruction, including a new API that allows you to create your own custom image processing pipelines.

Bring your iOS or iPadOS game to visionOS

Discover how to transform your iOS or iPadOS game into a uniquely visionOS experience. Increase the immersion (and fun factor!) with a 3D frame or an immersive background. And invite players further into your world by adding depth to the window with stereoscopy or head tracking.