What's new in ARKit?

Asked on 2025-03-26


At WWDC 2024, Apple introduced several updates to ARKit, primarily for visionOS. Here are the key highlights:

- Room Tracking: ARKit now includes a room tracking feature that allows apps to tailor experiences based on the room you're in. It can identify room boundaries and recognize transitions between rooms, enabling unique experiences for each space. This is covered in the "Create enhanced spatial computing experiences with ARKit" session.

-

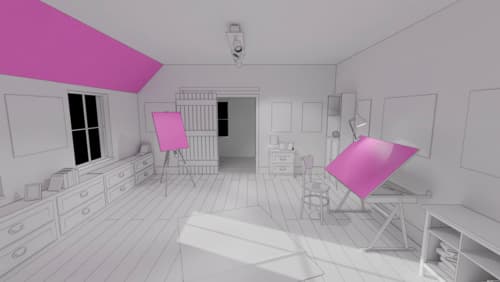

Plane Detection: A new slanted plane alignment has been introduced, which allows for detecting angled surfaces in addition to horizontal and vertical ones. This enhancement is useful for placing virtual content on various surfaces. More details can be found in the Create enhanced spatial computing experiences with ARKit session.

- Object Tracking: ARKit can now track real-world objects that are statically placed in your environment, providing the position and orientation of these items to anchor virtual content. This feature is new to visionOS and is detailed in the "Create enhanced spatial computing experiences with ARKit" session.

- Hand Tracking: Improvements have been made to hand tracking, offering options for continuous or predicted hand tracking, depending on the needs of your app. This is particularly useful for gesture detection and attaching content to hands. Learn more in the "Create enhanced spatial computing experiences with ARKit" session.

These updates are designed to enhance spatial computing experiences, allowing developers to create more immersive and interactive applications.
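As a rough illustration of the room tracking and slanted plane detection features listed above, here is a minimal sketch for visionOS 2. It assumes the app has already opened an immersive space and obtained world-sensing authorization. The `RoomAndPlaneModel` type, its method names, and the logging are hypothetical and only for illustration; they are not taken from the session.

```swift
#if os(visionOS)
import ARKit

// Hypothetical model type for illustration; the name and structure are assumptions.
@MainActor
final class RoomAndPlaneModel {
    private let session = ARKitSession()
    private let roomTracking = RoomTrackingProvider()
    // .slanted is the alignment added alongside .horizontal and .vertical.
    private let planeDetection = PlaneDetectionProvider(
        alignments: [.horizontal, .vertical, .slanted]
    )

    /// Starts both providers if the device supports them.
    func start() async {
        guard RoomTrackingProvider.isSupported, PlaneDetectionProvider.isSupported else {
            print("Room tracking or plane detection is not supported here.")
            return
        }
        do {
            try await session.run([roomTracking, planeDetection])
        } catch {
            print("Could not start the ARKit session: \(error)")
        }
    }

    /// Logs room anchors as they are added, updated, or removed.
    func processRoomUpdates() async {
        for await update in roomTracking.anchorUpdates {
            let room = update.anchor
            switch update.event {
            case .added, .updated:
                print("Room \(room.id), current room: \(room.isCurrentRoom)")
            case .removed:
                print("Room \(room.id) removed")
            }
        }
    }

    /// Logs only the planes whose alignment ARKit reports as slanted.
    func processPlaneUpdates() async {
        for await update in planeDetection.anchorUpdates where update.anchor.alignment == .slanted {
            print("Slanted plane \(update.anchor.id): \(update.event)")
        }
    }
}
#endif
```

In a SwiftUI app, one plausible way to use this is to call `start()`, `processRoomUpdates()`, and `processPlaneUpdates()` from separate `.task` modifiers on the immersive view, so each update loop runs for as long as the view is visible.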

Discover RealityKit APIs for iOS, macOS and visionOS

Learn how new cross-platform APIs in RealityKit can help you build immersive apps for iOS, macOS, and visionOS. Check out the new hover effects, lights and shadows, and portal crossing features, and view them in action through real examples.

Discover area mode for Object Capture

Discover how area mode for Object Capture enables new 3D capture possibilities on iOS by extending the functionality of Object Capture to support capture and reconstruction of an area. Learn how to optimize the quality of iOS captures using the new macOS sample app for reconstruction, and find out how to view the final results with Quick Look on Apple Vision Pro, iPhone, iPad or Mac. Learn about improvements to 3D reconstruction, including a new API that allows you to create your own custom image processing pipelines.

Create enhanced spatial computing experiences with ARKit

Learn how to create captivating immersive experiences with ARKit’s latest features. Explore ways to use room tracking and object tracking to further engage with your surroundings. We’ll also share how your app can react to changes in your environment’s lighting on this platform. Discover improvements in hand tracking and plane detection which can make your spatial experiences more intuitive.